
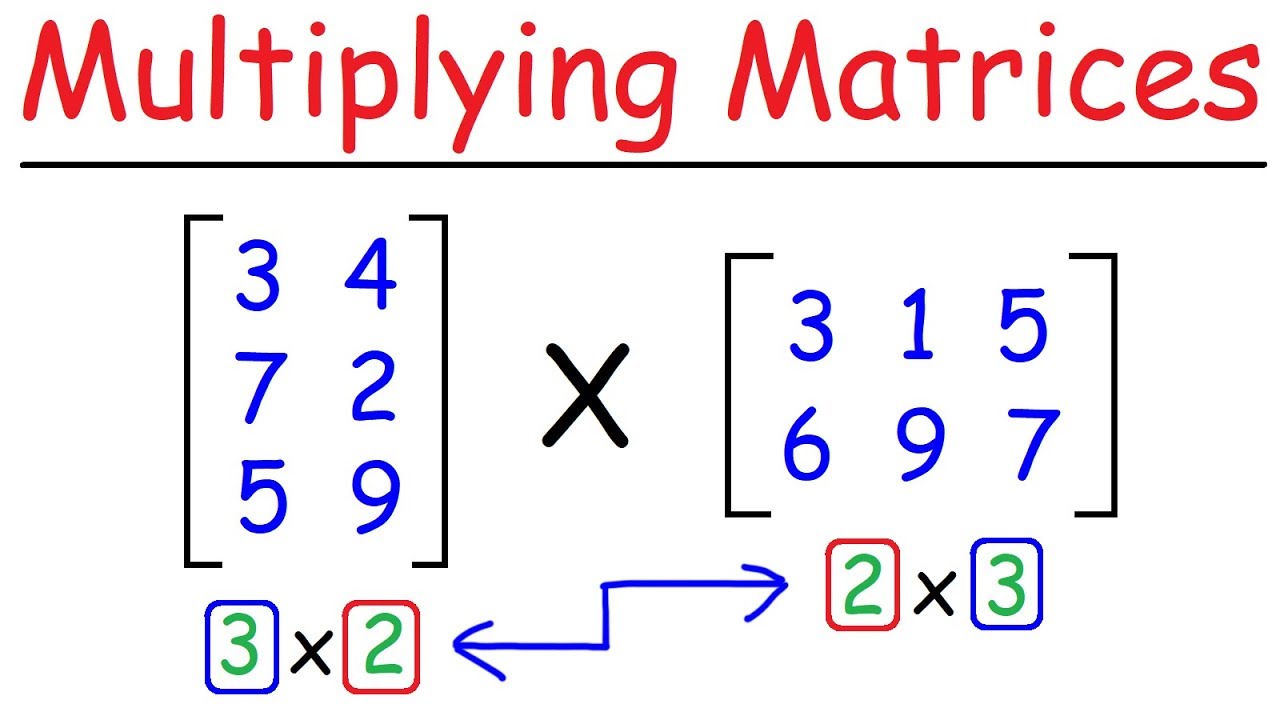

5/15/2023

Motrix multiplication

I would be satisfied if I could run some kind of isapprox check, with some fixed (maybe not the default) parameters, and get true. Some people would want bit-identical answers (see Java historically, an abandoned plan).

Good to know about CNR, but I suppose you get wrong answers with it too, only now it is always the same wrong answer, with “bitwise identical results”, so you wouldn’t notice. You should be able to use (strict) CNR in Julia with MKL.jl, so someone could check, with: julia> ENV["MKL_CBWR"] = "AVX2,STRICT"

Intel® MKL 2019 Update 3 introduces a new strict CNR mode which provides stronger reproducibility guarantees for a limited set of routines and conditions. When strict CNR is enabled, MKL will produce bitwise identical results, regardless of the number of threads, for the following functions: ?gemm, ?trsm, ?symm, and their CBLAS equivalents. Additionally, strict CNR is only supported under the following conditions: the CNR code-path must be set to AVX2 or later, or the AUTO code-path is used and the processor is an Intel processor supporting AVX2 or later instructions.
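The isapprox-style check with fixed parameters mentioned above could look roughly like the following sketch. It is written in Python with the standard library's `math.isclose` rather than Julia's `isapprox`; the helper name `roughly_equal` and both tolerance values are assumptions chosen for illustration, not recommendations. The value compared against zero is the one reported by the OP.

```python
import math

def roughly_equal(x, y, rel_tol=1e-9, abs_tol=1e-12):
    """Hypothetical helper: compare two floats with fixed tolerances.

    Both tolerance defaults are illustrative assumptions.
    """
    return math.isclose(x, y, rel_tol=rel_tol, abs_tol=abs_tol)

observed = -3.583133801120832e-16  # value reported by the OP
expected = 0.0                     # the exact answer

# With the default abs_tol=0.0, a purely relative test can never
# match a nonzero value against exactly zero.
strict = math.isclose(observed, expected)

# With a fixed absolute tolerance, the tiny residual is accepted.
tolerant = roughly_equal(observed, expected)

print(strict, tolerant)
```

The design point is that a relative tolerance alone is not enough when the exact answer is 0.0, so any such check needs an absolute tolerance that fits the scale of the problem.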

CNR mode comes at the price of performance (though when comparing to Julia it seems to be negligible, if there is any at all), but it means you get the same result every time on MATLAB. I actually think Julia should have a switch to choose this mode in MKL.jl. It seems that MATLAB is using a relatively old version of MKL. My guess is that they keep it in order to keep the hack of forcing the code path for AMD CPUs.

Is there a way to fix BLAS to avoid FMA when dangerous?

“I don’t think that the conclusion here is that FMA is ‘dangerous’. Sometimes it will give more accurate results than non-fused operations, sometimes (probably less often) it will happen to give less accurate results.”

That’s not a very satisfying answer (though I upvoted). If it were, say, only -3.583133801120832e-16 from the OP, rather than the correct exact 0.0, I wouldn’t worry much. And it’s not simply that you get two different answers; I can understand that now.

I still think FMA is dangerous (if you don’t know what you’re doing, as with all floating-point, which is a wicked can of worms), while useful. But let’s just concentrate first on BLAS only. An easy apparent “fix” would be to just not use FMA there; then this wouldn’t have happened: no slight or big differences, and no two different answers. Getting two different answers can actually be a good thing. Then on FMA hardware, you could run with and without it and compare, at least occasionally, for a sanity check (is there an easy way to disable it on such hardware? julia --cpu-target=sandybridge seems to do it, but likely only for everything in Julia except BLAS; the “-fma” and “-fma4” options for --cpu-target didn’t work, while seemingly they should have).

I can expect and am willing to accept slightly different answers (and thus not all results accurate) across systems, as long as they do not diverge a lot, for some definition of “a lot”.
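To make the fused versus non-fused difference concrete, here is a minimal Python sketch. It does not call hardware FMA. Instead it emulates a fused multiply-add with exact rational arithmetic followed by a single rounding, which is an assumption made so the example behaves the same on any machine. The helper name `fused_multiply_add` and the input values are hypothetical.

```python
from fractions import Fraction

def fused_multiply_add(a: float, b: float, c: float) -> float:
    """Emulate fma(a, b, c): form a*b + c exactly, then round once.

    Illustrative emulation only, not a hardware FMA instruction.
    """
    exact = Fraction(a) * Fraction(b) + Fraction(c)
    return float(exact)  # converting the exact value rounds it to a double

a = b = 1.0 + 2.0**-30

# Ordinary multiplication rounds a*b to the nearest double,
# dropping the 2**-60 term of the exact product.
product = a * b

# Non-fused: a*b is rounded first, so subtracting the rounded
# product gives exactly zero.
unfused = a * b - product

# Fused: the exact a*b is used, so the rounding error of the
# earlier multiplication shows up as a tiny nonzero result.
fused = fused_multiply_add(a, b, -product)

print(unfused, fused)
```

Mathematically `a*b - a*b` is zero, so here the non-fused path happens to return the expected answer while the fused path returns the rounding error of the product. In other computations the single rounding of a fused operation is what makes the result more accurate, which is the point made in the quoted reply.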

Across machines, you “might get non-deterministic results” for “BLAS or LAPACK operations” (also for e.g. “muladd”), and not just with the default BLAS. That’s to be expected across languages using these dependencies, such as Python and MATLAB.

Actually MATLAB, as opposed to Julia, is using the CNR branch of MKL. You may read about it at Introduction to Conditional Numerical Reproducibility (CNR). You may see it by running version -blas on MATLAB:

>> version -blas
'Intel(R) Math Kernel Library Version 2019.0.3 Product Build 20190125 for Intel(R) 64 architecture applications, CNR branch AVX2'
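One common reason results can differ between machines or thread counts is that floating-point addition is not associative, so a library that groups a sum differently can round differently. The Python sketch below shows only that effect on three numbers; it makes no claim about how MKL or any other BLAS actually orders its reductions.

```python
# The same three values, added with two different groupings.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)

print(left_to_right)   # 0.6000000000000001
print(right_to_left)   # 0.6
print(left_to_right == right_to_left)  # False
```

Both results are correctly rounded at every step and differ only in the last bit, which is the kind of discrepancy a reproducibility mode is meant to remove by fixing the order of operations.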
